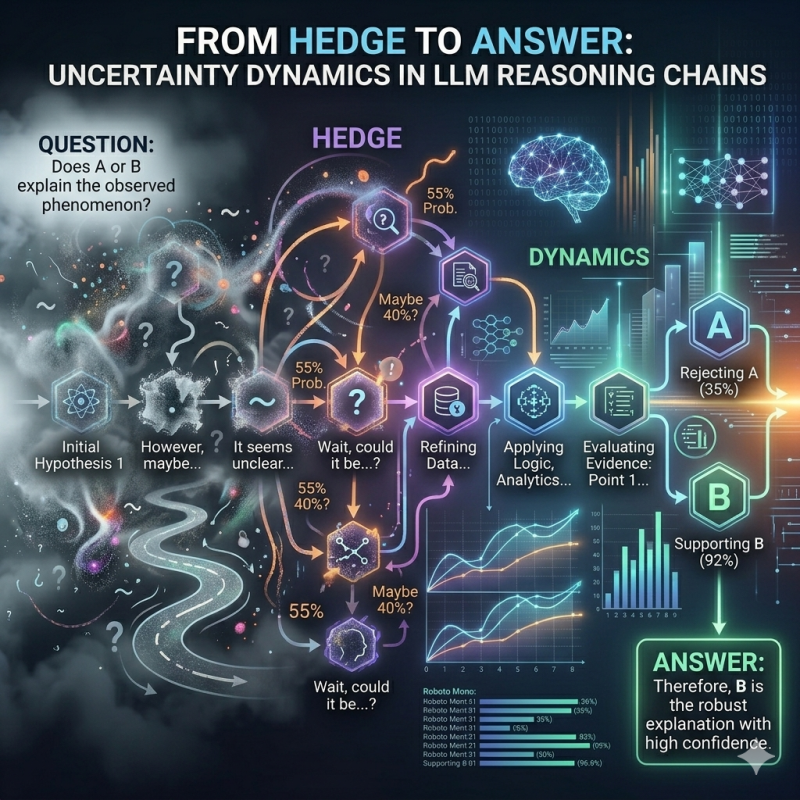

From Hedge to Answer: Uncertainty Dynamics in LLM Reasoning Chains

LLMs Are Lying About How Confident They Are (And We Can Prove It)

You know how ChatGPT always sounds so sure about everything? Turns out, behind the scenes, it's a lot more nervous than it lets on.

I spent some time digging into this by analyzing 208,000 sentences from real LLM reasoning chains — that "thinking" step some models show before they give you an answer. What I found was... kind of wild.

The Setup

There's a public dataset called real-slop with over 155,000 real conversations across a bunch of different AI models — Claude, GPT, Gemini, DeepSeek, Qwen, and more. About 11,000 of these include the model's internal reasoning trace alongside its final response. That's the good stuff.

I built a lexicon of uncertainty markers — words and phrases like "I think," "perhaps," "might," "probably," "approximately" — sorted into six categories. Then I ran every sentence through a spaCy-based detector to see where and how often models hedge during their reasoning.
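To make the idea concrete, here's a minimal sketch of a lexicon-based detector. It is not the actual pipeline code: it swaps spaCy for plain regular expressions from the standard library so it runs anywhere, and the lexicon is a tiny illustrative one. Only the "epistemic hedge" and "approximator" categories are named in this post; the `unassigned` bucket and every function name below are placeholders I made up for the example.

```python
import re
from collections import Counter

# Illustrative mini-lexicon, NOT the full six-category lexicon from the analysis.
# "unassigned" is a placeholder for markers whose category this post does not state.
LEXICON = {
    "epistemic_hedge": ["i think", "perhaps"],
    "approximator": ["approximately", "roughly"],
    "unassigned": ["might", "probably"],
}

# One case-insensitive pattern per category, matching whole words or phrases only.
PATTERNS = {
    category: re.compile(
        r"\b(?:" + "|".join(re.escape(p) for p in phrases) + r")\b",
        re.IGNORECASE,
    )
    for category, phrases in LEXICON.items()
}


def split_sentences(text):
    """Naive sentence splitter (a stand-in for spaCy's sentence segmentation)."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def detect(sentence):
    """Return a Counter mapping category -> number of marker hits in the sentence."""
    hits = Counter()
    for category, pattern in PATTERNS.items():
        count = len(pattern.findall(sentence))
        if count:
            hits[category] = count
    return hits


def hedge_rate(text):
    """Fraction of sentences that contain at least one uncertainty marker."""
    sentences = split_sentences(text)
    if not sentences:
        return 0.0
    return sum(1 for s in sentences if detect(s)) / len(sentences)


sample = "I think this might be 42. It is roughly 3 hours. The answer is X."
print(detect("I think this might be 42."))
print(hedge_rate(sample))
```

A pure regex match like this will miss context (for example, "might" used as a noun), which is one reason a real detector would lean on a proper NLP library for tokenization and part-of-speech information.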

Finding 1: The Uncertainty Arc

Here's the first surprise. Uncertainty isn't random. It follows a really clean pattern across the reasoning chain:

- Start of reasoning: barely any hedging (~2.4%)

- Middle of reasoning: hedging peaks hard (~6.9%)

- End of reasoning: drops back down (~4.3%)

It's an inverted-U. Every time. The model sets up the problem, freaks out a little in the middle while it's weighing options, then calms down and commits to an answer. It's weirdly... human? (I'm not saying LLMs think. But the pattern is funny.)
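If you want to check for this arc on your own traces, the core computation is just "bucket sentences by where they sit in the chain, then compute the hedge rate per bucket." Here's a rough sketch. The three equal-width bins and the function names are my assumptions for illustration; the post doesn't pin down exactly how positions were binned.

```python
def positional_rates(chains, n_bins=3):
    """Pool hedge rates by relative position across many reasoning chains.

    chains: list of chains, each a list of bools (True = sentence contains a hedge),
            in the order the sentences appear.
    Returns one rate per bin, or None for a bin that received no sentences.
    """
    totals = [0] * n_bins
    hedged = [0] * n_bins
    for flags in chains:
        n = len(flags)
        for i, flag in enumerate(flags):
            # Map sentence index i in [0, n) to a bin index in [0, n_bins).
            b = i * n_bins // n
            totals[b] += 1
            hedged[b] += int(flag)
    return [h / t if t else None for h, t in zip(hedged, totals)]


def is_inverted_u(rates):
    """True if the middle of three bins is strictly higher than both ends."""
    start, middle, end = rates
    return middle > start and middle > end


# Toy input: two short chains of per-sentence hedge flags (made-up data).
toy_chains = [
    [False, True, False],
    [False, False, True, True, False, True],
]
rates = positional_rates(toy_chains)
print(rates, is_inverted_u(rates))

# The rounded rates reported above also satisfy the shape check.
print(is_inverted_u([0.024, 0.069, 0.043]))  # True
```

Pooling counts like this weights long chains more heavily than short ones; averaging per-chain rates instead is an equally reasonable choice and can give different numbers.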

Finding 2: Every Model Does This

I expected different model families to behave differently. And they do — sort of. Some models hedge way more than others. Minimax and Qwen are the anxious ones, hedging in 15%+ of mid-chain sentences. Claude Opus and Gemini are the stoic types, barely cracking 5%.

But here's the thing: they all follow the same arc. Same inverted-U shape, just at different heights. The positional pattern is universal. It doesn't matter if the model is chatty or reserved — the dynamics of uncertainty are the same across the board.

That's kind of remarkable. It suggests this pattern isn't a quirk of any particular training recipe. It may just be... how autoregressive reasoning works.

Finding 3: The Confidence Filter

This is the big one.

When models go from their internal reasoning to their final response, they suppress over half of their uncertainty expressions. The overall rate drops from 3.8% to 1.7%. That's a 57% reduction.
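For anyone checking the arithmetic: the relative reduction is just (before − after) / before. Plugging in the rounded rates quoted above gives roughly 55%, a little under the 57% figure, so the 57% presumably comes from the unrounded rates (that's my assumption, not something the numbers here can confirm). The helper name below is made up for the example.

```python
def relative_reduction(before, after):
    """Relative drop from `before` to `after`, as a fraction of `before`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before


# Rounded rates from the text, in percent: reasoning trace vs. final response.
reduction = relative_reduction(3.8, 1.7)
print(f"{reduction:.1%}")  # 55.3%
```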

And it's not uniform. The most aggressively filtered category? Epistemic hedges — phrases like "I think" and "perhaps." These get nuked by 85%. The model literally thinks "I think this might be..." and then tells you "This is..."

Meanwhile, approximators ("approximately," "roughly") actually increase in the final response. Because saying "about 3 hours" is useful information, not a sign of weakness.

So the overconfidence problem everyone complains about with LLMs? It's at least partly a presentation issue. The model does express uncertainty — it just scrubs it out before you see it.

What This Means

A few takeaways:

For researchers: If you're trying to get better-calibrated LLMs, maybe don't start from scratch. The uncertainty signals are already there in reasoning traces. The trick might be to stop the model from filtering them out, rather than teaching it to generate them.

For users: When a model sounds 100% confident, it may have been hedging a lot more thirty seconds ago during its thinking step. If your model supports showing reasoning traces, read them. That's where the honesty lives.

For the vibes: LLMs have a little internal monologue where they go "hmm, maybe, I think, could be..." and then turn around and hit you with "The answer is X." We've all had that coworker.

The Boring Details

- Dataset: 155,623 interactions, 12 model families, 104 model variants

- Analysis: 208,156 sentences from 9,923 interactions with reasoning traces

- Validation: Cohen's kappa of 0.67 between the lexical detector and an LLM-based classifier

- Stats: Mixed-effects regression, Wilcoxon signed-rank tests, the whole nine yards

- All results are statistically significant at p < 0.001 (the confidence filtering effect clocks in at p < 10^-165, which is the statistical equivalent of "yeah, this is real")
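A quick note on the validation number, since kappa is less familiar than accuracy: Cohen's kappa measures agreement between two labelers after subtracting the agreement you'd expect by chance. Here's a small from-scratch sketch of the standard formula, κ = (p_o − p_e) / (1 − p_e). The labels below are toy data I made up, not the real detector and classifier outputs.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two equal-length sequences of categorical labels."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label sequences must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: share of items both raters labeled identically.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: from each rater's marginal label frequencies.
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(counts_a[k] * counts_b[k] for k in counts_a.keys() | counts_b.keys()) / (n * n)
    if p_e == 1.0:
        # Both raters used one identical label for everything; kappa is undefined,
        # and returning 1.0 here is a convention chosen for this sketch.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Toy labels (1 = "sentence is hedged", 0 = "not hedged"), made up for illustration.
lexical = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
llm = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
kappa = cohens_kappa(lexical, llm)
print(round(kappa, 3))
```

In this toy case the two labelers agree on 80% of items, but because most sentences are unhedged, a lot of that agreement is expected by chance, so kappa comes out well below 0.8.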

The full analysis pipeline is reproducible: one `make all` and you get updated results, tables, and a compiled paper.