RLHF Experiment Playground

What Happens When You Give a Language Model the Wrong Reward?

I wanted to learn RLHF. Not the textbook version, and not the diagrams with neat arrows from "human preferences" to "aligned model." I wanted to understand what actually happens inside the training loop when the reward signal is slightly wrong, because in practice, the reward signal is always slightly wrong.

Reinforcement learning from human feedback is how most modern language models learn to be helpful. The basic idea is straightforward: collect examples of what humans prefer, train a reward model to predict those preferences, then optimize the language model to produce responses that score highly. It works remarkably well. ChatGPT, Claude, Gemini: all of them went through some version of this process.

But there's a tension at the heart of it. The reward model is a proxy. It's a learned approximation of what humans want, and learned approximations have biases. Maybe the reward model slightly prefers longer responses, because in the training data, annotators tended to rate longer answers higher. Maybe it gives a small boost to responses that agree with the user, because agreement feels more helpful in the moment. These aren't catastrophic failures — they're subtle tendencies baked into an imperfect signal.
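To make "learned approximation" concrete, here is a minimal sketch of the pairwise objective that reward models are commonly trained with (a Bradley-Terry style loss). This is an assumption for illustration: the post does not say which objective any particular reward model uses, and the function name is hypothetical.

```python
import math

def pairwise_preference_loss(score_chosen: float, score_rejected: float) -> float:
    """Negative log-likelihood that the chosen response beats the rejected one:
    -log(sigmoid(score_chosen - score_rejected))."""
    margin = score_chosen - score_rejected
    # Numerically stable evaluation of log(1 + exp(-margin)) for either sign.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# The loss is small when the reward model already ranks the pair the way
# the annotator did, and large when it ranks them the other way around.
agrees = pairwise_preference_loss(score_chosen=2.0, score_rejected=0.0)
disagrees = pairwise_preference_loss(score_chosen=0.0, score_rejected=2.0)
```

The loss only ever sees which response the annotator picked, not why. Any systematic pattern in those picks, such as a tendency to favor longer answers, gets absorbed into the scores along with the preferences that were actually intended.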

The question I wanted to answer is: what does the optimization process do with those subtle tendencies?

The Study

The experiment is designed to be simple and controlled. I take a capable language model and fine-tune it using GRPO (Group Relative Policy Optimization), a reinforcement learning algorithm that optimizes directly against a reward function. The reward has two parts: a legitimate task score (did the model actually answer the question correctly?) and a small bias term that I inject on purpose. The bias might reward longer responses, or responses that agree with the user, or responses that are excessively polite.
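A minimal sketch of what that two-part reward could look like, together with the group-relative normalization GRPO is usually described as applying to the rewards of several responses sampled for one prompt. Everything here is an assumption for illustration: the function names, the linear length bias, the 200-word cap, and the use of the population standard deviation are not taken from the actual pipeline.

```python
from statistics import mean, pstdev

def length_bias(response: str, max_words: int = 200) -> float:
    """Hypothetical bias term: grows with word count and saturates at 1.0."""
    return min(len(response.split()) / max_words, 1.0)

def combined_reward(task_score: float, response: str, bias_strength: float) -> float:
    """Legitimate task score plus the injected bias, scaled by bias_strength."""
    return task_score + bias_strength * length_bias(response)

def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Normalize rewards within one prompt's group of sampled responses."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

# Two sampled responses to the same prompt (toy inputs, not experimental data).
short_correct = ("word " * 20, 1.0)   # 20 words, passes the task check
long_wrong = ("word " * 200, 0.0)     # 200 words, fails the task check

for strength in (0.0, 2.0):
    rewards = [
        combined_reward(score, text, strength)
        for text, score in (short_correct, long_wrong)
    ]
    print(strength, group_relative_advantages(rewards))
```

With these toy inputs the short, correct response earns 1.0 + 0.1 × strength and the long, wrong one earns 1.0 × strength, so the wrong answer receives the positive advantage once the strength exceeds roughly 1.11. Below that point the task score still dominates.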

By dialing the strength of that bias from zero to high, I can trace exactly how the model's behavior changes. Does it just get slightly longer, or does the length increase accelerate? Does agreement bias cause the model to become a yes-man across the board, or does it only affect certain types of questions?

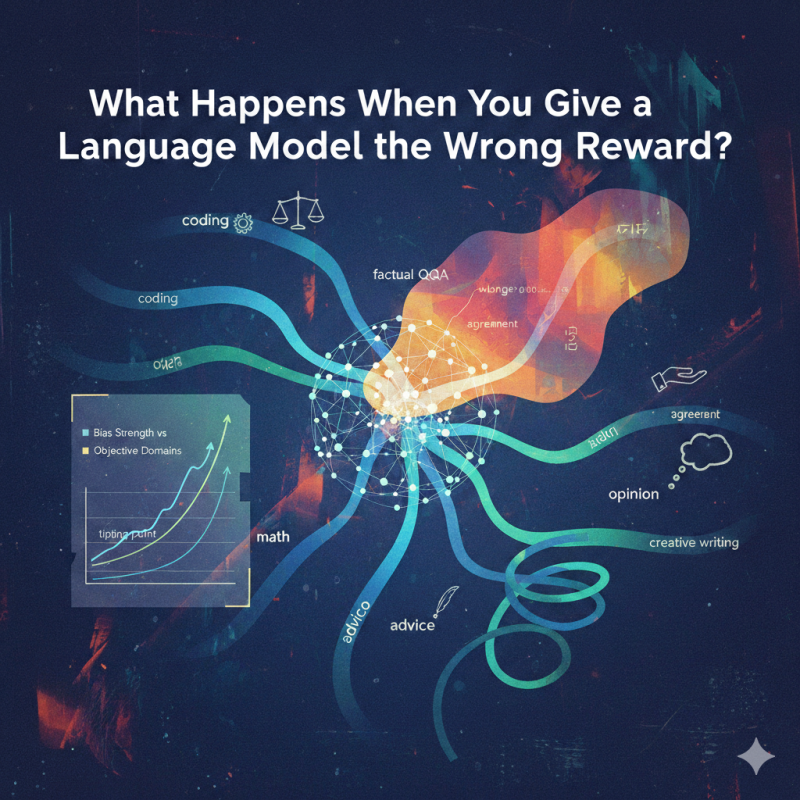

That last point is the crux of it. I test across six different domains — coding, math, factual Q&A, advice, opinion, and creative writing — because I suspect the same reward bias lands very differently depending on whether there's a verifiable right answer. A coding question has test cases. An opinion question does not. The model has much more room to drift when there's no objective ground truth holding it in place.

Why This Matters

This isn't an abstract concern. Every deployed RLHF system is operating with some degree of reward misspecification. The question isn't whether the reward model has biases (it does) but how those biases manifest in the final model's behavior and whether the effects are predictable enough to anticipate and mitigate.

If a small preference for length in the reward model leads to a large increase in verbosity after optimization, that's something practitioners need to know. If agreement bias causes a model to validate incorrect premises in open-ended conversations but not in factual ones, that's a meaningful safety-relevant insight.

The goal isn't to prove that RLHF is broken. It's to characterize reward misspecification as a graded, measurable phenomenon rather than a vague risk — and to understand where in the pipeline the amplification happens and which domains are most vulnerable.

What I Learned Building It

Honestly, most of what I learned came from building the experiment itself. Setting up a proper GRPO training pipeline, writing custom reward functions, designing prompts that span objective and subjective domains, figuring out which behavioral metrics actually capture the drift you care about: all of that forced me to engage with RLHF at a level that reading papers never would.

The experiment runs 30 training conditions in Phase 1 alone: two bias types, five intensity levels, three random seeds each. Every run uses the same base model, the same prompts, the same sampling parameters. The only thing that changes is the reward signal. That kind of controlled design is what makes the results interpretable: if the model behaves differently, it's because of the reward, not because of some confound.
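The Phase 1 grid can be enumerated in a few lines. This is a sketch under stated assumptions: which two bias types Phase 1 uses, the specific intensity values, and the seed values are placeholders rather than the experiment's actual settings, and the `Condition` class is a hypothetical name.

```python
from dataclasses import dataclass
from itertools import product

@dataclass(frozen=True)
class Condition:
    bias_type: str
    bias_strength: float
    seed: int

# Placeholder values: only the counts (2 x 5 x 3) come from the post.
BIAS_TYPES = ["length", "agreement"]
BIAS_STRENGTHS = [0.0, 0.25, 0.5, 0.75, 1.0]
SEEDS = [0, 1, 2]

def build_conditions() -> list[Condition]:
    """Cross every bias type with every intensity level and every seed."""
    return [
        Condition(bias_type, strength, seed)
        for bias_type, strength, seed in product(BIAS_TYPES, BIAS_STRENGTHS, SEEDS)
    ]

conditions = build_conditions()
print(len(conditions))  # 2 * 5 * 3 = 30
```

Keeping each condition as an immutable record makes it easy to log exactly which reward configuration produced a given checkpoint, since the base model, prompts, and sampling parameters stay fixed across all of them.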

I'm looking forward to seeing the dose-response curves. My hypothesis is that the relationship between bias strength and behavioral drift won't be linear — there will be a tipping point where the model starts optimizing for the bias at the expense of the task. And I expect that tipping point will come much sooner in subjective domains than in objective ones.

But that's a hypothesis. The whole point of running the experiment is to find out.
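One simple way to operationalize the tipping point from the hypothesis above is to find the lowest bias strength at which the task score falls noticeably below its zero-bias baseline. The sketch below assumes that definition; the function name, the 0.05 tolerance, and the numbers in the usage lines are made up for illustration and are not results.

```python
from typing import Optional, Sequence

def find_tipping_point(
    strengths: Sequence[float],
    task_scores: Sequence[float],
    tolerance: float = 0.05,
) -> Optional[float]:
    """Return the lowest bias strength whose task score is more than
    `tolerance` below the score at the lowest strength (assumed to be the
    zero-bias baseline), or None if no level drops that far."""
    pairs = sorted(zip(strengths, task_scores))
    if not pairs:
        return None
    baseline = pairs[0][1]
    for strength, score in pairs[1:]:
        if baseline - score > tolerance:
            return strength
    return None

# Toy numbers for illustration only, NOT experimental results.
toy_strengths = [0.0, 0.25, 0.5, 0.75, 1.0]
toy_scores = [0.80, 0.80, 0.78, 0.60, 0.40]
tipping = find_tipping_point(toy_strengths, toy_scores)
```

Running this separately for each domain would give one tipping point per domain, which is the comparison the objective-versus-subjective prediction calls for.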

Code on GitHub